QAT is able to attain better performance but requires extra computation resources and access to representative training data. We should also be aware of the gap between the theoretically optimal quantization strategy and hardware kernel support: due to the lack of GPU kernel support for certain types of matrix multiplication (e.g. …), not every quantization method results in an actual inference speedup.
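To illustrate why kernel support matters, here is a minimal NumPy sketch of two ways to run a linear layer whose weights are stored as INT8. It is an emulation under stated assumptions, not a real GPU kernel. The weight-only path assumes no mixed INT8 x float kernel exists, so the weights are dequantized back to float before an ordinary float matmul: weight storage shrinks 4x versus float32, but the arithmetic is unchanged. The W8A8 path quantizes the activations too and multiplies integers with int32 accumulation, which is what an INT8 GEMM kernel would do. The per-tensor symmetric scheme and the helper names `quantize_symmetric`, `weight_only_matmul` and `int8_matmul` are hypothetical choices for illustration.

```python
import numpy as np


def quantize_symmetric(x: np.ndarray):
    """Per-tensor symmetric INT8 quantization: returns int8 codes and a float scale."""
    qmax = 127
    scale = float(np.abs(x).max()) / qmax or 1.0  # fall back to 1.0 for an all-zero tensor
    q = np.clip(np.round(x / scale), -qmax, qmax).astype(np.int8)
    return q, scale


def weight_only_matmul(x: np.ndarray, w_q: np.ndarray, w_scale: float) -> np.ndarray:
    """Assumes no INT8 x float kernel: dequantize the weights, then do a float matmul.

    Weight storage shrinks, but the arithmetic is still floating point.
    """
    return x @ (w_q.astype(np.float32) * w_scale)


def int8_matmul(x: np.ndarray, w_q: np.ndarray, w_scale: float) -> np.ndarray:
    """W8A8: quantize activations too, multiply integers with int32 accumulation
    (emulating an INT8 GEMM kernel), then rescale the result once."""
    x_q, x_scale = quantize_symmetric(x)
    acc = x_q.astype(np.int32) @ w_q.astype(np.int32)
    return acc.astype(np.float32) * (x_scale * w_scale)


rng = np.random.default_rng(0)
x = rng.standard_normal((4, 64)).astype(np.float32)   # activations
w = rng.standard_normal((64, 32)).astype(np.float32)  # float32 weights
w_q, w_scale = quantize_symmetric(w)
print(w.nbytes, w_q.nbytes)  # prints: 8192 2048
```

Both functions approximate `x @ w`; only the second would actually run on integer arithmetic units, and only if the hardware and library provide a kernel for that exact combination of types.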

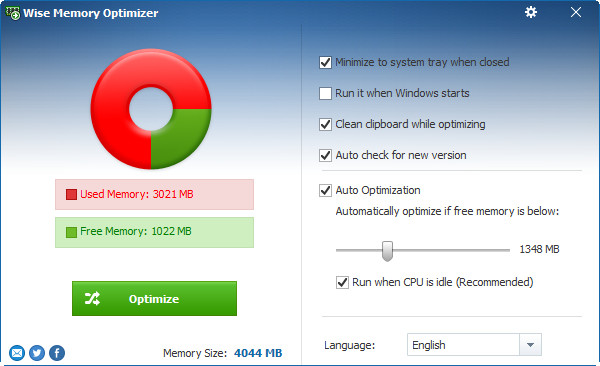

Check the previous post on large model training for different types of training parallelism and memory-saving designs, including CPU memory offloading. This post focuses on network compression techniques and architecture-specific improvements for transformer models; many architectural changes, especially those for attention layers, help with transformer decoding speed.

Knowledge Distillation (KD; Hinton et al. … 2020) is a straightforward way to build a smaller, cheaper model (the “student model”) to speed up inference by transferring skills from a pre-trained, expensive model (the “teacher model”) into the student. There is not much restriction on how the student architecture should be constructed, except that its output space must match the teacher's so that a proper learning objective can be constructed.

Figure: The generic framework of teacher-student knowledge distillation training.

Given a dataset, a student model is trained to mimic the outputs of a teacher via a distillation loss. Usually a neural network has a softmax layer; for example, an LLM outputs a probability distribution over tokens. Let’s denote the logits layer right before softmax as $\mathbf{z}_t$ and $\mathbf{z}_s$ for the teacher and student models, respectively. When distilling BERT, the training loss can combine the distillation loss with a supervised training loss (e.g. the masked language modeling loss in the case of BERT) and a special cosine embedding loss to align the hidden state vectors between teacher and student.

Distillation can be easily combined with quantization, pruning or sparsification techniques, where the teacher model is the original full-precision, dense model and the student is quantized, pruned, or trimmed to have a higher sparsity level.

There are two common approaches for applying quantization on a deep neural network:

- Post-Training Quantization (PTQ): A model is first trained to convergence and then we convert its weights to lower precision without more training. It is usually quite cheap to implement, in comparison to training.
- Quantization-Aware Training (QAT): Quantization is applied during pre-training or further fine-tuning.
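A minimal NumPy sketch of one common distillation objective, assumed here following Hinton et al.: the KL divergence between the teacher's and the student's softmax outputs at a temperature $T$, computed from teacher logits $\mathbf{z}_t$ and student logits $\mathbf{z}_s$, optionally plus a cross-entropy term on ground-truth labels $y$ weighted by $\lambda$:

$$\mathcal{L}_\text{KD} = T^2\,\mathrm{KL}\big(\mathrm{softmax}(\mathbf{z}_t/T)\,\|\,\mathrm{softmax}(\mathbf{z}_s/T)\big) + \lambda\,\mathcal{L}_\text{CE}\big(y, \mathrm{softmax}(\mathbf{z}_s)\big)$$

The $T^2$ factor follows Hinton et al.'s suggestion for keeping gradient magnitudes comparable across temperatures. The function names `log_softmax` and `kd_loss`, the parameter names `T` and `lam`, and their default values are assumptions for illustration; any particular distillation recipe may weight or combine the terms differently.

```python
import numpy as np


def log_softmax(z: np.ndarray, T: float = 1.0) -> np.ndarray:
    """Numerically stable log-softmax over the last axis at temperature T."""
    z = z / T
    z = z - z.max(axis=-1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))


def kd_loss(z_t, z_s, y=None, T: float = 2.0, lam: float = 0.5) -> float:
    """Distillation loss between teacher logits z_t and student logits z_s.

    z_t, z_s: arrays of shape (batch, num_classes); y: optional int labels (batch,).
    """
    log_p_t = log_softmax(z_t, T)  # softened teacher distribution (log space)
    log_p_s = log_softmax(z_s, T)  # softened student distribution (log space)
    # KL(p_t || p_s) averaged over the batch, scaled by T**2 (Hinton et al.)
    soft = np.mean(np.sum(np.exp(log_p_t) * (log_p_t - log_p_s), axis=-1))
    loss = T ** 2 * soft
    if y is not None:
        # Supervised cross-entropy on ground-truth labels at T = 1
        hard = -np.mean(log_softmax(z_s)[np.arange(len(y)), y])
        loss += lam * hard
    return float(loss)


rng = np.random.default_rng(0)
z_t = rng.standard_normal((4, 10))              # teacher logits
z_s = z_t + 0.5 * rng.standard_normal((4, 10))  # a student that is close but not identical
y = np.array([1, 3, 5, 7])
print(kd_loss(z_t, z_s), kd_loss(z_t, z_s, y))
```

Working in log space avoids taking `log` of probabilities that underflow to zero, which matters at low temperatures or with large logit ranges.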
Further methods for making inference cheaper in memory and/or faster in time include:

- Memory offloading: temporarily unused data is offloaded to the CPU and read back when needed later. This helps with memory usage but causes higher latency.
- Smart batching strategies, e.g. EffectiveTransformer packs consecutive sequences together to remove padding within one batch.
- Network compression techniques, such as pruning, quantization and distillation. A model of smaller size, in terms of parameter count or bitwidth, should demand less memory and run faster.
- Improvements specific to a target model architecture.
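The padding-removal idea behind sequence packing can be sketched in NumPy as below. This is a hypothetical illustration of the general technique, not EffectiveTransformer's actual implementation: the valid tokens of a padded `(batch, max_len, hidden)` tensor are gathered into a single `(total_tokens, hidden)` tensor, so token-wise layers such as the feed-forward network do no work on padding, and the offsets record where each sequence starts so results can be scattered back. The function names `pack` and `unpack` are assumptions.

```python
import numpy as np


def pack(padded: np.ndarray, lengths: np.ndarray):
    """Gather the valid tokens of a padded (batch, max_len, hidden) tensor.

    Returns the packed (total_tokens, hidden) tensor, the offsets such that
    sequence i occupies packed[offsets[i]:offsets[i + 1]], and the validity mask.
    """
    max_len = padded.shape[1]
    mask = np.arange(max_len)[None, :] < lengths[:, None]  # (batch, max_len), True for real tokens
    packed = padded[mask]  # row-major order keeps each sequence contiguous
    offsets = np.concatenate([[0], np.cumsum(lengths)])
    return packed, offsets, mask


def unpack(packed: np.ndarray, mask: np.ndarray, pad_value: float = 0.0) -> np.ndarray:
    """Scatter packed tokens back into a padded tensor (padding filled with pad_value)."""
    out = np.full(mask.shape + packed.shape[1:], pad_value, dtype=packed.dtype)
    out[mask] = packed
    return out


# Usage: three sequences of lengths 3, 1 and 2 padded to max_len = 4, hidden size 2.
lengths = np.array([3, 1, 2])
padded = np.arange(3 * 4 * 2, dtype=np.float32).reshape(3, 4, 2)
packed, offsets, mask = pack(padded, lengths)
w = np.ones((2, 5), dtype=np.float32)  # stand-in for a token-wise (feed-forward) weight
out = unpack(packed @ w, mask)  # 6 token rows computed instead of 12
```

Attention still has to respect sequence boundaries, which is what `offsets` is for; handling that efficiently is beyond this sketch.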

Large memory footprint. Both model parameters and intermediate states are needed in memory at inference time. For example, the KV cache must be stored in memory during decoding: for a batch size of 512 and a context length of 2048, the KV cache totals 3TB, that is 3x the model size (!). Moreover, the inference cost of the attention mechanism scales quadratically with the input sequence length.

Low parallelizability. Inference generation is executed in an autoregressive fashion, making the decoding process hard to parallelize.

In this post, we will look into several approaches for making transformer inference more efficient. Some are general network compression methods, while others are specific to the transformer architecture.

We generally consider the following goals for model inference optimization:

- Reduce the memory footprint of the model by using fewer GPU devices and less GPU memory.
- Reduce the required computation complexity by lowering the number of FLOPs needed.
- Reduce the inference latency and make things run faster.

Several methods can be used to make inference cheaper in memory and/or faster in time. One is to apply various forms of parallelism to scale up the model across a large number of GPUs; smart parallelism of model components and data makes it possible to run a model with trillions of parameters.
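To see why the KV cache dominates memory at large batch sizes and context lengths, here is a back-of-the-envelope estimator. It assumes standard multi-head attention in which every layer caches one key tensor and one value tensor of shape `(batch, n_kv_heads, seq_len, head_dim)` in 16-bit precision; the function name `kv_cache_bytes` and the example configuration are hypothetical and not tied to any specific model.

```python
def kv_cache_bytes(batch_size: int, seq_len: int, n_layers: int,
                   n_kv_heads: int, head_dim: int, bytes_per_elem: int = 2) -> int:
    """Bytes needed to cache keys and values for all layers during decoding.

    Assumes each layer stores one K and one V tensor of shape
    (batch_size, n_kv_heads, seq_len, head_dim); bytes_per_elem=2 assumes fp16/bf16.
    """
    per_layer = 2 * batch_size * n_kv_heads * seq_len * head_dim * bytes_per_elem  # 2 = K and V
    return n_layers * per_layer


# Hypothetical configuration, not a specific published model.
size = kv_cache_bytes(batch_size=512, seq_len=2048, n_layers=32, n_kv_heads=32, head_dim=128)
print(size / 2**30, "GiB")  # prints: 512.0 GiB
```

The cache grows linearly in batch size and context length, whereas the attention score matrix computed per head has `seq_len * seq_len` entries, which is where the quadratic cost comes from.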

Large transformer models are mainstream nowadays, creating SoTA results for a variety of tasks. They are powerful but very expensive to train and use. The extremely high inference cost, in both time and memory, is a big bottleneck for adopting a powerful transformer to solve real-world tasks at scale.

Why is it hard to run inference for large transformer models? Besides the increasing size of SoTA models, there are two main factors contributing to the inference challenge (Pope et al.): a large memory footprint and low parallelizability.
